Science Tells You How. Nobody Told You That Wasn't Enough.

From the food pyramid to artificial intelligence — how the boundary between "how it works" and "what you should do" gets crossed without anyone noticing, and what to do about it.

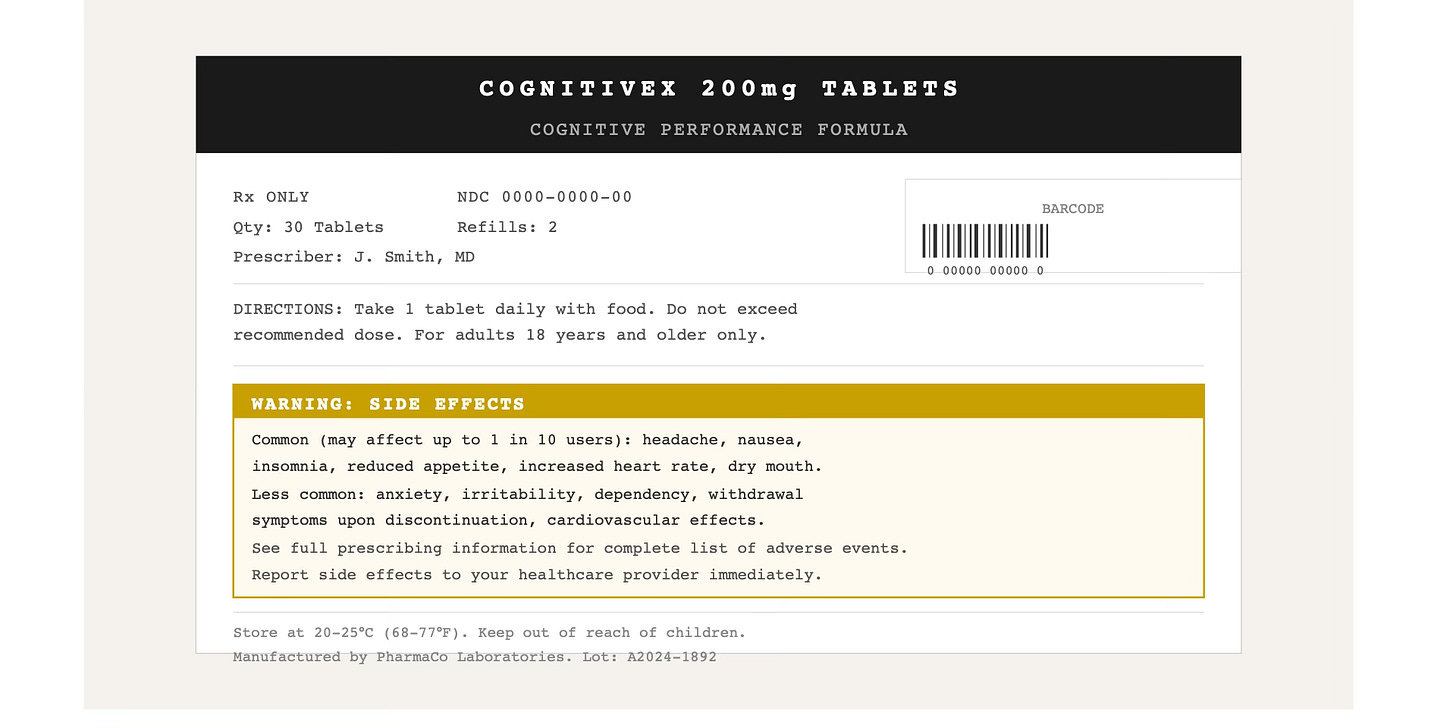

Every prescription drug comes with a side effects section.

Have you ever wondered why? The drug was developed by scientists. It went through clinical trials. Regulators approved it. Doctors prescribe it. And yet — somewhere on the label, in small print — there is a list of things that can go wrong.

Not because the science was bad. Because the science was doing exactly what science does: it solved a specific, measurable problem in a system that doesn’t end at the measurement boundary.

This is not a flaw in science. It is science being honest about its own nature.

The problem starts when we forget to read that honesty carefully.

The Boundary Nobody Mentions

In A Treatise of Human Nature, published in 1739 and 1740, the Scottish philosopher David Hume noticed something that has shaped moral philosophy ever since. He observed that no matter how many facts you accumulate about how things are, you cannot logically derive from those facts alone how things ought to be. The gap between description and prescription, between is and ought, cannot be crossed by evidence alone.

This became known as Hume’s is-ought problem. In plain terms: science can tell you how something works. It cannot tell you what to do with that knowledge.

The distinction sounds philosophical. Its consequences are very practical.

When How Becomes What to Do

In 1992, the United States Department of Agriculture released the Food Pyramid — one of the most influential public health documents in history. It was built on decades of research. Researchers had established that saturated fat raises cholesterol levels in the blood. Correct. The how was right.

The translation into what to do (eat less fat, eat more carbohydrates) went badly wrong. Food manufacturers who removed fat from processed foods often replaced it with sugar and refined starch to maintain palatability. In the decades that followed, adult obesity in the United States climbed from under a quarter of adults to more than forty percent. Type 2 diabetes rates climbed. Heart disease, the very problem the pyramid was designed to address, remained the country’s leading cause of death.

The scientists were not dishonest. The research was real. The boundary crossing happened quietly, exactly as Hume described: the shift from fat raises cholesterol to therefore avoid fat was imperceptible — but consequential.

Who Pays the Price — And Who Doesn’t

This is the question that gets lost in the translation from how to what to do.

The cost is almost never paid at the moment the advice is followed. It arrives later, paid by the same person in a part of the system that wasn’t being measured. Or it is spread across a population while the benefit concentrates in the measurable metric. Or it accumulates slowly, invisibly, until the weight of it becomes undeniable.

Consider antibiotics — one of medicine’s greatest achievements. The how (bacteria respond to these compounds) was correct. The translation into widespread prescribing practice contributed to antibiotic resistance — a systemic cost paid not by the individual being treated, but by the collective, across generations.

Consider the opioid crisis. Clinical trials showed opioids reduced pain. Correct. The translation into routine, long-term prescribing for chronic pain produced rates of dependency that short-term trials were never designed to detect.

Consider margarine. Decades of scientific consensus that saturated fat was dangerous made margarine the recommended alternative to butter. Then research established that the trans fats in many margarines were more harmful than the saturated fat they were meant to replace. The payer was the person who had switched in good faith, following the science.

In each case: good intentions. Real research. And a cost paid somewhere outside the measurement frame.

Economists have a name for this structural situation: moral hazard. It describes what happens when the person making a decision is insulated from its consequences. The recommender receives the credit when the intervention works. The patient, the consumer, the follower pays when it doesn’t — often years later, in ways that cannot be traced back to the original source. Nassim Taleb captured this in a sharper formulation: skin in the game. Those who give advice without bearing its consequences are operating in a fundamentally different situation from those who follow it.

This is not an accusation. The researcher who published the study moved on to the next study. The committee that designed the food pyramid disbanded. The physician who wrote the prescription cannot track what happens to a patient over twenty years. It is the structure that makes the cost invisible, not any malice in the intention.

The Authority That Doesn’t Ask to Be Questioned

The mechanism that makes all of this possible has a name too: appeal to authority, or argumentum ad verecundiam, a label John Locke introduced in the seventeenth century for a reasoning error that is far older. It describes the tendency to accept a claim as true because of who said it, rather than because of the evidence behind it.

A PhD attached to a name is an authority signal. A peer-reviewed citation is an authority signal. A panel of experts is an authority signal. These signals are useful shortcuts — they genuinely correlate with reliability much of the time. The problem is that they also bypass the critical evaluation step precisely when it matters most: when the authority is crossing the boundary from how into what to do.

The authority signal has taken many forms throughout history — the priest, the physician, the scientist, the expert panel. Each era produces a new source of answers that feels more reliable than personal judgment. Ours has produced artificial intelligence.

AI systems are trained on vast amounts of human knowledge. They respond in fluent, confident prose. They cite sources, structure arguments, and deliver recommendations with the tone of an expert who has considered all the evidence. Studies of how people use these systems suggest that users form trust in AI based on fluency, tone, and perceived authority, often accepting outputs without verification.

But AI systems cannot bear the consequences of their recommendations. They have no skin in the game. They will not be present when the advice produces an outcome — good or bad. The moral hazard is complete: maximum authority signal, zero accountability for consequences.

This is not a reason to reject AI any more than it is a reason to reject science. It is a reason to apply exactly the same discipline: notice when the authority is crossing from how to what to do, and before following, pause.

The Structure of the Problem

The pattern is consistent enough to have a shape.

Science optimizes for what it can measure, in conditions it can control, over time periods it can fund. These are not arbitrary limitations — they are what makes science rigorous. Isolating variables is how you establish causality. Controlled conditions are how you eliminate confounds. Fixed time periods are how you run studies that finish.

But human beings are open systems. Everything is connected to everything else. The intervention that solves a problem here creates a pressure there. The optimization that improves the measured metric may be quietly degrading something unmeasured. The solution that works in the controlled conditions of a trial may behave differently across decades of real life.

This is not a failure of science. It is the honest structural reality of what science can reach.

The failure — when it happens — is the uncritical translation of how into what to do, without asking: what does this assume? What is outside the measurement frame? What am I not tracking?

The Tool You Already Have

Hume’s observation wasn’t that science is wrong or useless. It was that facts alone cannot tell you what to value, what to pursue, or how to live. Something else is required. And that something else has been available to you all along.

There is a category of knowledge that sits outside what science can reach: what you don’t yet know, but can experience and try. Not what studies show. Not what experts recommend. What actually happens — in your body, in your life, in your experience — when you follow the advice.

This is not anti-science. It is the instrument that completes the picture science cannot finish.

The next time you encounter a well-researched recommendation — backed by studies, endorsed by experts, translated cleanly into a protocol — before you follow it, pause at the boundary. Ask what the research actually measured. Ask what it assumed about you that may not be true. Ask what the measurement frame left out.

Then check it against what you already know. Not what you’ve read — what you’ve lived. For decades, nutritional science declared breakfast the most important meal of the day. Institutions endorsed it. Schools built it into policy. Millions of people who naturally skipped breakfast and felt better for it spent years overriding that signal — eating food they didn’t want, at a time their body wasn’t asking for it, because the recommendation carried scientific authority. Their own experience was telling them something. They stopped listening.

If what is recommended contradicts your lived experience, that contradiction is data — not a sign that you are doing something wrong, but a signal worth examining before you proceed. Only when the recommendation is at least consistent with what you know from your own life does it make sense to try it deliberately and observe what happens.

Is it working?

Not in theory. Not statistically. In your actual experience.

That question is older than science. It is how humanity learned most of what it knows. And it remains the only instrument that can answer what science, by its own nature, cannot.

The important question is not whether something is right or wrong, good or bad — but whether it works.

References:

Hume, D. (1739–1740). A Treatise of Human Nature, Book III, Part I, Section I.

Willett, W.C., & Stampfer, M.J. (2003). Rebuilding the food pyramid. Scientific American, 288(1), 64–71.

Centers for Disease Control and Prevention. Obesity prevalence data, 1960–2020.

Centers for Disease Control and Prevention. Antibiotic resistance threats in the United States, 2019.

Van Zee, A. (2009). The promotion and marketing of OxyContin: commercial triumph, public health tragedy. American Journal of Public Health, 99(2), 221–227.